March in TigerLand

Dear friends,

We hope you had a majestic March. Last month, we landed a major update to the TigerBeetle Client API, improved performance and liveness, and saw TigerBeetle used as a benchmark workload for Linux filesystems. We took our love for speed to the track, solved a static allocation puzzle, and put TigerBeetle to the test—processing 1 trillion transactions…

And we announced the 4th Systems Distributed conference in Boston!

Let’s go!

The more you leave out, the more you highlight what you leave in.

Henry Green

API design is a form of storytelling. Authors of both APIs and novels state their intent through unfolding actions and outcomes, deliberately adding or omitting elements for the best user/reader experience. And we all know, the greatest “stories” are told over and over again!

So, last month, we worked to improve the TigerBeetle Client API.

Previously, people wondered “what happened to our hero” because the TigerBeetle cluster returned result codes for failed events, but an empty response for the happy path! While returning zero bytes instead of a result code for OK was efficient in saving memory and network bandwidth, result codes alone couldn’t tell the whole story, as a fundamental piece of information was left out: the timestamp when the event (either a success or a failure) was processed by the cluster…

With release 0.17.0, TigerBeetle’s new Client API exposes the strict serializable timestamp of each event processed by the TigerBeetle cluster through the create_accounts and create_transfers operations. With this new result, applications can learn the resulting global order of events across workers, without additional round trips.

Idempotency checks are easier to handle, since both the created and exists results return the same commit timestamp from the cluster. Because every event, not only successful ones, carries its timestamp, the result composes naturally with queries, helping answer questions like “why did a transfer with exceeds_debits fail?” by querying point-in-time balances at the rejection timestamp.

We implemented various improvements to the language clients for more idiomatic APIs and a better developer experience.

A race condition in the underlying tb_client implementation was fixed, unifying how eviction and shutdown are handled.

Applications using 0.16.x will require updates to move to 0.17.0 or later. Refer to this complete list of changes and guidance on code migration for each supported programming language. Note that the cluster will continue to support clients as old as 0.16.4 for a transition period.
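To make the idempotency point concrete, here is a minimal Python sketch of how an application might treat per-event results once every result carries a timestamp. The Status and EventResult types and the handle function are hypothetical stand-ins modeled on the prose above, not the actual TigerBeetle client API; the real names and shapes live in each client's documentation.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    # Hypothetical status names taken from the prose; not the client's real enum.
    CREATED = "created"
    EXISTS = "exists"
    EXCEEDS_DEBITS = "exceeds_debits"


@dataclass(frozen=True)
class EventResult:
    status: Status
    timestamp: int  # commit timestamp assigned by the cluster (hypothetical field)


def handle(result: EventResult) -> tuple[str, int]:
    # "exists" on a retry refers to the same event that was already created,
    # and carries the same commit timestamp, so both mean "committed".
    if result.status in (Status.CREATED, Status.EXISTS):
        return ("committed", result.timestamp)
    # Failures carry a timestamp too, which can anchor a point-in-time
    # balance query to explain why the event was rejected.
    return ("rejected", result.timestamp)
```

With this shape, a retry whose reply says exists produces exactly the same outcome as the original create, so the application needs no special-case branch for retries.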

TigerBeetle’s metrics were improved:

The client_request_round_trip metric was fixed, adjusting the resolution to microseconds to best fit a 32-bit integer.

Times for reserved VSR operations are now recorded, giving visibility into the latency of client registrations (the first request a client sends to the cluster) using the register operation.

New metrics tracking the minimum and maximum client releases seen by the TigerBeetle cluster were added.

We added a new metric to track time spent on CPU work, specifically callbacks.
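The microsecond resolution for client_request_round_trip is easier to appreciate with a bit of arithmetic. A minimal sketch, assuming the metric is an unsigned 32-bit count of elapsed time (the newsletter doesn't say whether it is signed): at nanosecond resolution the counter would overflow after about 4.3 seconds, while at microsecond resolution it covers a little over 71 minutes.

```python
# Range of an unsigned 32-bit counter at two time resolutions
# (assumption: the metric is unsigned).
U32_MAX = 2**32 - 1

max_ns_seconds = U32_MAX / 1e9  # counting nanoseconds
max_us_seconds = U32_MAX / 1e6  # counting microseconds

print(f"nanoseconds:  up to {max_ns_seconds:.2f} s")
# nanoseconds:  up to 4.29 s
print(f"microseconds: up to {max_us_seconds:.0f} s (~{max_us_seconds / 60:.1f} min)")
# microseconds: up to 4295 s (~71.6 min)
```

Microseconds keep enough precision for fast round trips while leaving ample headroom for slow ones; milliseconds would stretch the range to weeks at the cost of resolution.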

The CDC Job received new command line arguments to help diagnose connectivity issues. Users can now specify --amqp-timeout-seconds and --tigerbeetle-timeout-seconds (both default to 30 seconds), which define the maximum wait time for a reply from the AMQP server and the TigerBeetle cluster, respectively. Read more about the CDC Job.

The TigerBeetle repair protocol kicks in when a replica is too far behind to catch up by replaying the write-ahead log (WAL). In such scenarios, the replica requests the data blocks of a recent checkpoint so that it can then process the operation log normally. This is used to recover crashed replicas, and it is also a key feature for surviving grey failures, where a replica experiences a transient slowdown due to a hardware or hypervisor fault.

We have improved the repair protocol by enforcing a per-replica budget of blocks that can be requested, preventing one lagging replica from slowing down the state sync process.
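One way to picture a per-replica budget is a counter per peer that caps how many blocks that peer can obtain before the budget is refilled. The following is our own minimal Python illustration, not TigerBeetle’s implementation: the RepairBudget class, the window-based refill, and the budget size are all hypothetical.

```python
from collections import defaultdict


class RepairBudget:
    """Hypothetical per-replica budget: each peer may obtain at most
    `blocks_per_window` blocks until the budget is refilled."""

    def __init__(self, blocks_per_window: int):
        self.blocks_per_window = blocks_per_window
        self.spent = defaultdict(int)  # replica index -> blocks granted this window

    def try_serve(self, replica: int, block_count: int) -> int:
        """Return how many of the requested blocks may be served right now."""
        remaining = self.blocks_per_window - self.spent[replica]
        granted = max(0, min(block_count, remaining))
        self.spent[replica] += granted
        return granted

    def refill(self) -> None:
        # Called when the budget timeout fires: every peer gets a fresh budget.
        self.spent.clear()
```

The design point is isolation: a lagging peer that asks for many blocks can exhaust only its own share, so other replicas still get their requests served in the same window.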

Besides the per-replica budget, we also introduced a dynamic jitter to the repair budget timeout to guard against situations where partitioned replicas can’t repair from each other.
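Jitter helps because timeouts that fire in lockstep can replay the same unlucky interaction every time. Below is a generic randomized-timeout sketch in Python; the jittered_timeout helper, the tick units, and the uniform jitter range are assumptions, since the newsletter doesn’t describe the exact distribution TigerBeetle uses. A seeded random.Random keeps runs reproducible, which matters when timeouts are exercised under deterministic simulation.

```python
import random


def jittered_timeout(base_ticks: int, jitter_ticks: int, rng: random.Random) -> int:
    """Hypothetical helper: the base timeout plus a random jitter in [0, jitter_ticks]."""
    return base_ticks + rng.randint(0, jitter_ticks)


rng = random.Random(42)  # seeded so that a simulation run is reproducible
timeouts = [jittered_timeout(100, 50, rng) for _ in range(5)]
print(timeouts)
```

Each replica draws its own timeout, so two replicas that would otherwise retry in perfect sync drift apart after a round or two.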

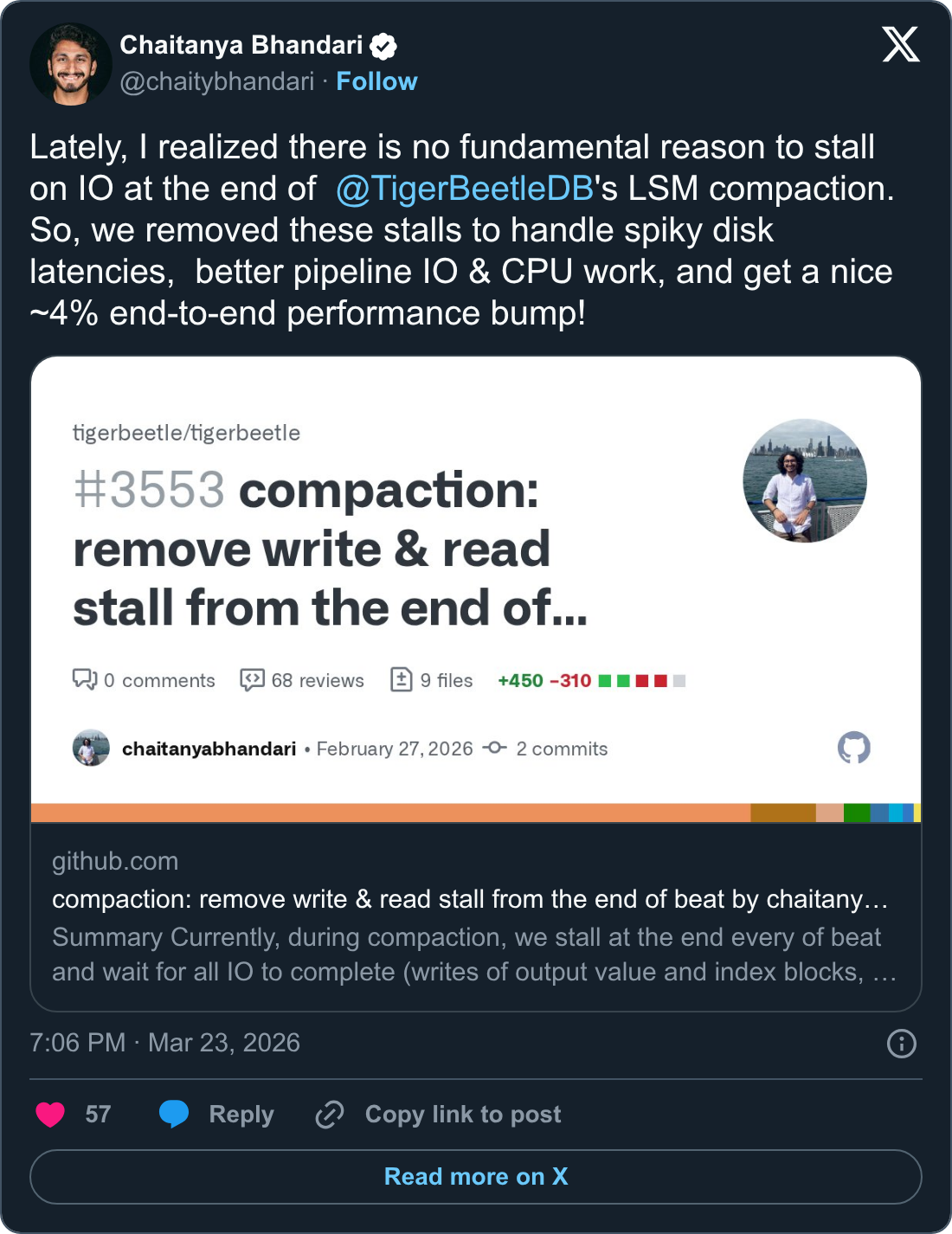

The TigerBeetle LSM Tree implementation achieves predictable latency because it splits compaction work into small increments, doing only the minimum amount of compaction required to ingest the next request. We improved throughput by issuing the compaction I/O asynchronously and moving on, waiting only at the checkpoint, where the database state must be durable before the WAL can be freed.
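The pacing idea can be sketched as spreading a known amount of compaction work over a fixed number of upcoming requests, doing on each one just enough that the rest still fits. This minimal Python sketch is our own illustration: the “beat” naming and abstract work units are assumptions, and it leaves out the asynchronous I/O entirely.

```python
def compaction_quota(total_work: int, beats_left: int) -> int:
    """Minimal work to do on this beat so the remaining work still fits
    in the remaining beats (ceiling division)."""
    return -(-total_work // beats_left)


def run_bar(total_work: int, beats: int) -> list[int]:
    """Hypothetical pacing loop: returns the work done on each beat."""
    done_per_beat = []
    remaining = total_work
    for beat in range(beats):
        quota = compaction_quota(remaining, beats - beat)
        done_per_beat.append(quota)
        remaining -= quota
    assert remaining == 0  # all work finishes by the last beat
    return done_per_beat
```

For example, run_bar(10, 4) returns [3, 3, 2, 2]: no single request absorbs a large compaction pause, which is where the latency predictability comes from.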

The CFO (Continuous Fuzzing Orchestrator) found an overly tight assertion that expected all writes in progress during a checkpoint to be block repairs. That isn’t true, since other writes, such as trailer blocks, can be in flight too! The condition manifested only in specific scenarios where replicas could saturate the maximum number of available IOPS.

Another performance improvement: serving reads from the write queue. Reads of a block that is currently being created or repaired can now be answered promptly from the write queue!
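The idea is simple to sketch: while a block’s write is still in flight, its contents are already in memory, so a read can be answered from there instead of waiting for the disk. This is a minimal Python illustration with a hypothetical BlockStore class using dictionaries in place of real storage, not TigerBeetle’s grid or I/O code.

```python
class BlockStore:
    """Hypothetical store where in-flight writes stay in a queue until the
    disk write completes; reads check that queue before going to disk."""

    def __init__(self):
        self.disk = {}         # address -> bytes, durable data
        self.write_queue = {}  # address -> bytes, writes in progress
        self.disk_reads = 0

    def submit_write(self, address: int, data: bytes) -> None:
        self.write_queue[address] = data

    def complete_write(self, address: int) -> None:
        # Once the write is durable, the block leaves the queue.
        self.disk[address] = self.write_queue.pop(address)

    def read(self, address: int) -> bytes:
        if address in self.write_queue:
            return self.write_queue[address]  # served from memory, no disk I/O
        self.disk_reads += 1
        return self.disk[address]
```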

Last month on IronBeetle, we covered TigerBeetle’s fault detection algorithm, which determines whether a replica is down or just laggy. We also started a new story arc about how TigerBeetle processes pending transfers that expire with a timeout, from the static memory allocation challenge of dealing with an unbounded amount of data, to how we scan expired transfers and how TigerBeetle uses VSR consensus to coordinate the execution of periodic tasks.
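The static allocation puzzle is that the number of expired transfers is unbounded while memory is fixed. A common answer is to process a bounded batch per step and resume later. The Python sketch below shows that generic pattern over a list kept sorted by expiry; it is our own illustration, the expire_batch function and BATCH_LIMIT are hypothetical, and it omits storage and consensus, which the IronBeetle episodes cover.

```python
import bisect

BATCH_LIMIT = 3  # hypothetical fixed batch size, standing in for a statically allocated buffer


def expire_batch(expiries: list[tuple[int, int]], now: int, limit: int = BATCH_LIMIT) -> list[int]:
    """Remove and return up to `limit` transfer ids whose expiry timestamp is <= now.

    `expiries` is kept sorted by (expires_at, transfer_id), so the expired
    transfers form a prefix of the list."""
    end = bisect.bisect_right(expiries, (now, float("inf")))
    count = min(end, limit)
    expired = [transfer_id for _, transfer_id in expiries[:count]]
    del expiries[:count]
    return expired
```

However many transfers have expired, each call does at most BATCH_LIMIT units of work; repeated calls drain the backlog over subsequent steps.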

Join us live every Thursday at 5pm UTC on Twitch, YouTube and X!

Jonas Axboe Debuts the TigerBeetle Radical SR3 at Sebring, March 6-8

Jens Axboe put Linux in the fast lane with io_uring. Last month, his son Jonas took our shared need for speed to the track at Sebring for the Radical Cup North America season opener… and finished pole. We’re proud to partner with and support Jonas at every turn!

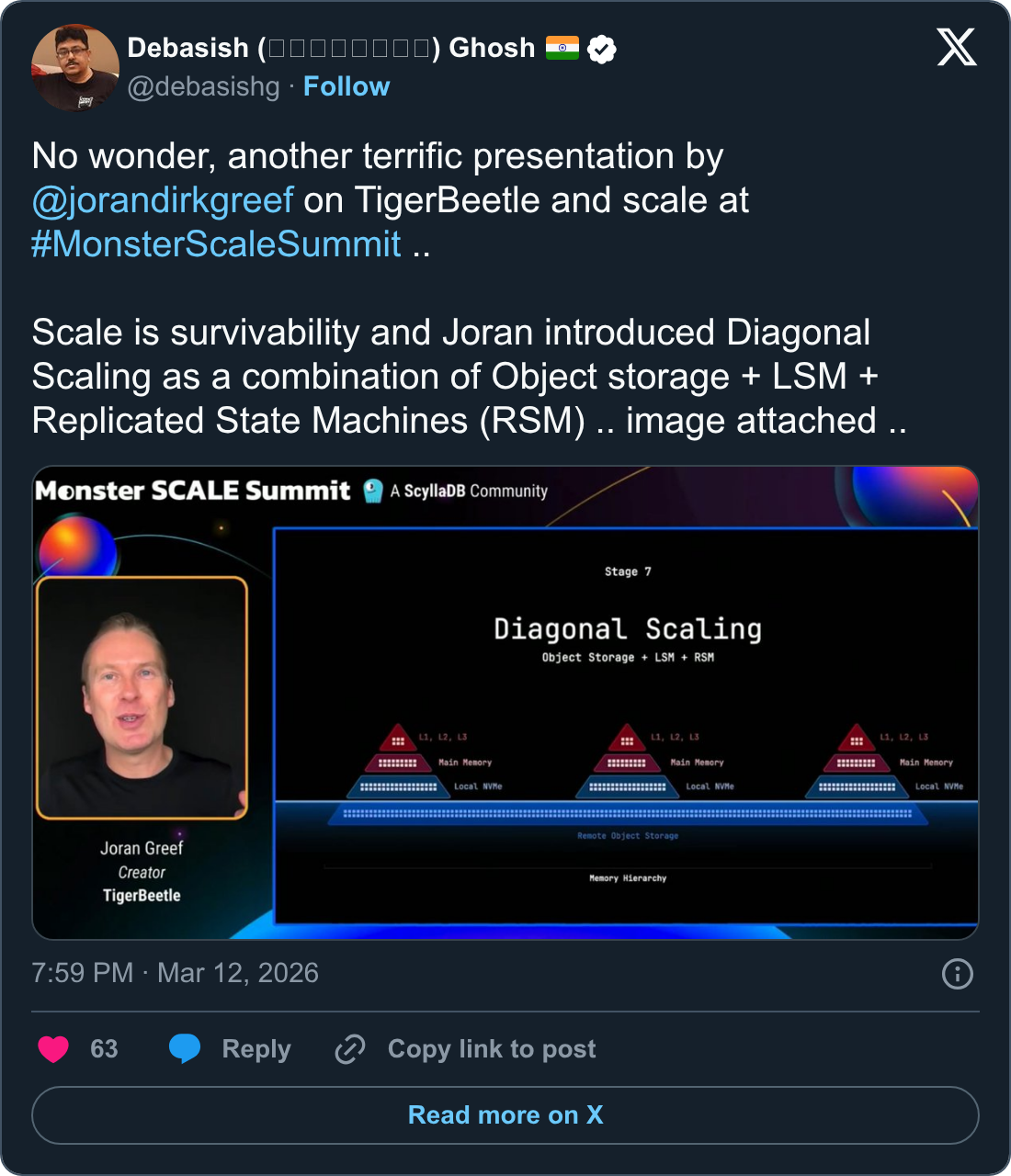

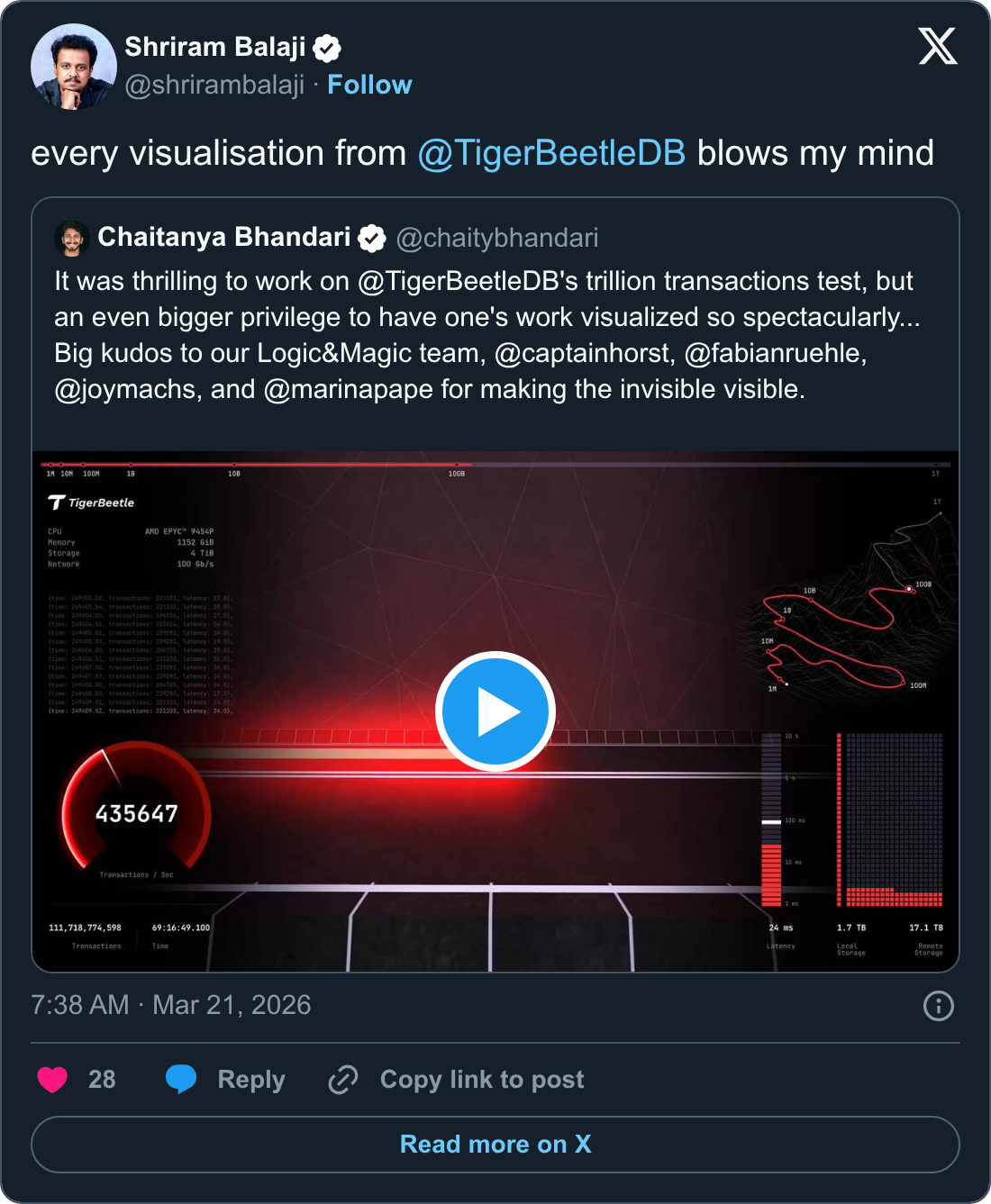

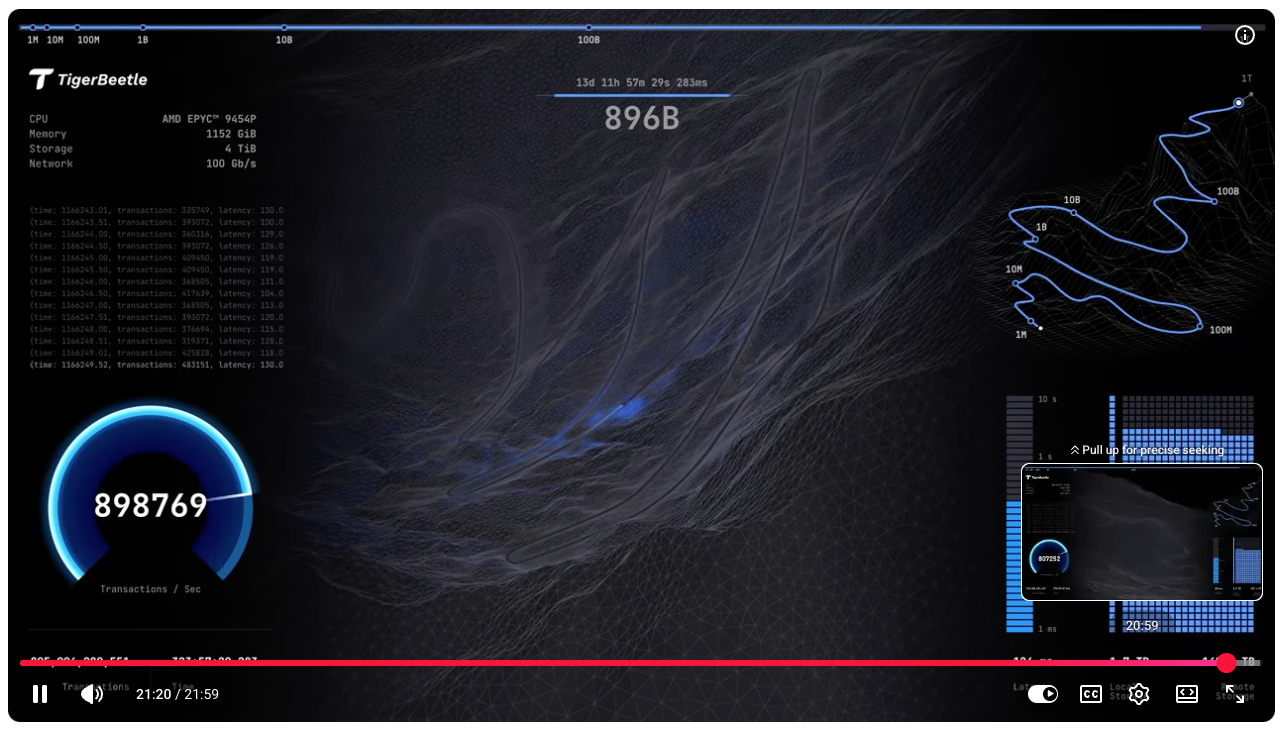

A Trillion Transactions with TigerBeetle, March 19

Scale is more than performance and distribution; scale is staying alive when everything that can break does… even through a trillion transactions. And so we processed a trillion transactions through TigerBeetle. Read the post, and watch TigerBeetle process 1,000,000,000,000 transactions… to see the invisible made visible!

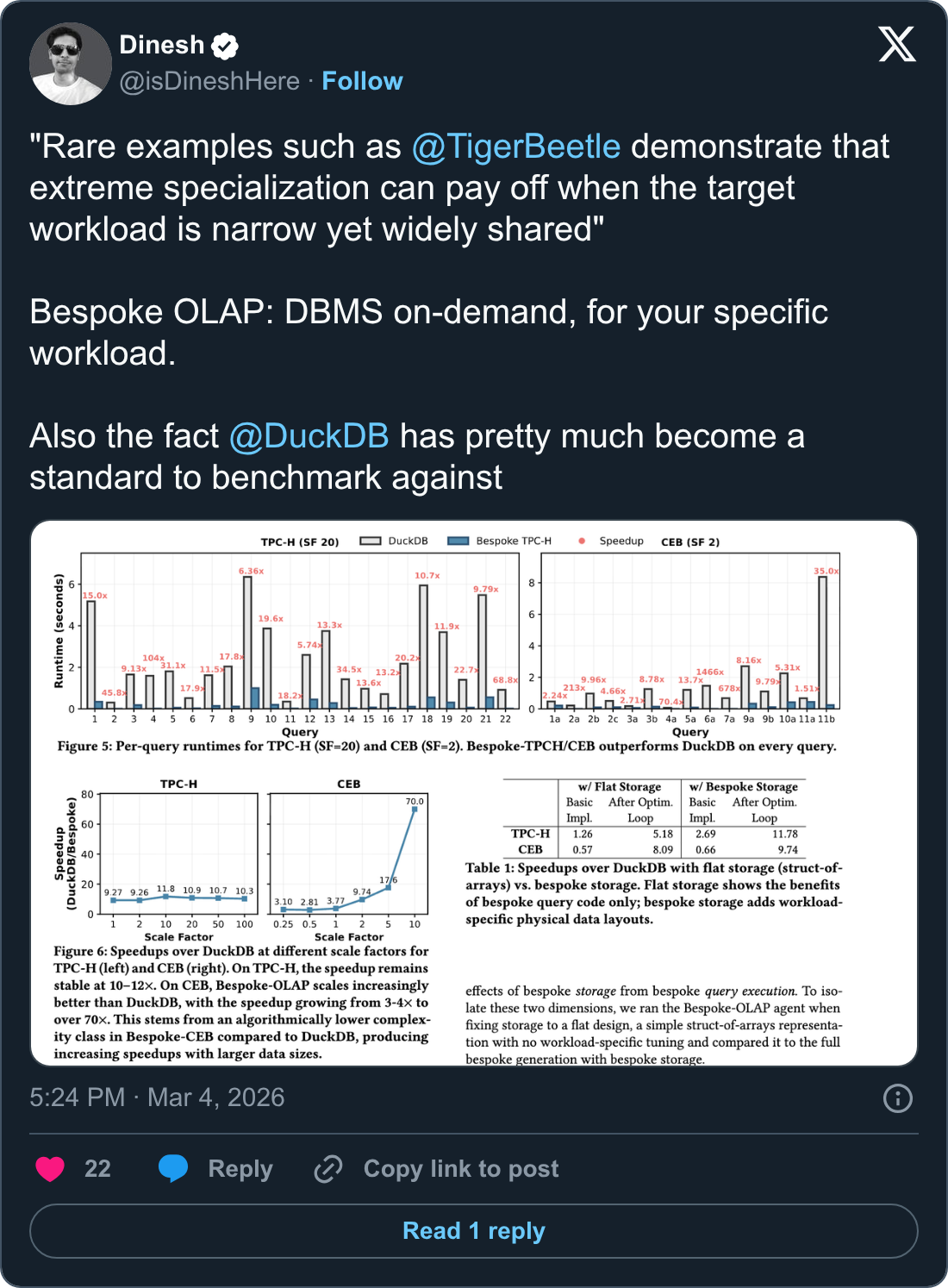

Linux 6.12 Through Linux 7.0 File-System Benchmarks For EXT4 + XFS, March 13

As a surprising side effect of its predictable performance, TigerBeetle is becoming a standard application workload for Linux filesystem benchmarks, and it was nice to see the result: “XFS gained the most out of TigerBeetle with the succeeding Linux kernel releases and closed the gap with EXT4.”

QCon London, March 16–18

Chaitanya curated QCon London’s Modern Performance Optimization track, which ventured into the High Luminosity Large Hadron Collider, vector search atop a columnar layout of dimensions, async-await for scheduling computations, performance profiling, and auto-derived JIT compilers!

The Next Generation of Real-Time Payments, Amsterdam, March 26

Ximedes hosted a breakout session at the Future of Payments conference in Amsterdam, discussing the challenges faced by current global financial systems and where DBMS technology is trending, including where TigerBeetle fits alongside general-purpose solutions. Dankjewel, Joris, Maarten, and Tom!

A Tale of Four Fuzzers (November 2025 throwback!)

Some time ago we overhauled TigerBeetle’s routing algorithm to better handle varying network topologies in a cluster, and ended up adding not one, not even two, but four very different new fuzzers to the system! Here’s why one fuzzer is not enough.

BugBash, April 23-24

Chaitanya will deliver a keynote on Protocol-Aware Deterministic Simulation Testing at BugBash, taking place at The Eaton Hotel in DC. Systems with multiple, interacting nodes are notoriously hard to get right, and in this talk, Chaitanya will share how deterministic simulation can be used not just for debugging correctness, but also for performance and building mental models.

Bravo!

Systems Distributed, July 27-28

Join us for the 4th Systems Distributed, in Boston: a conference to teach systems programming and systems thinking all the way across the stack, from systems languages to databases to distributed systems. Tickets are now available, with limited spots for the opening night party with the TigerBeetle team and SD26 speakers. Arrive early for Zig Day Boston on Sunday, July 26, to make friends, code, and hone your skills with Andrew and Loris!

‘Till next time… Ramble on!

The TigerBeetle Team