We Put a Distributed Database In the Browser – And Made a Game of It!

TigerBeetle is a distributed financial transactions database, designed for mission-critical safety. How do you test something so critical? Well, we could tell you (and we will), but why not show you?

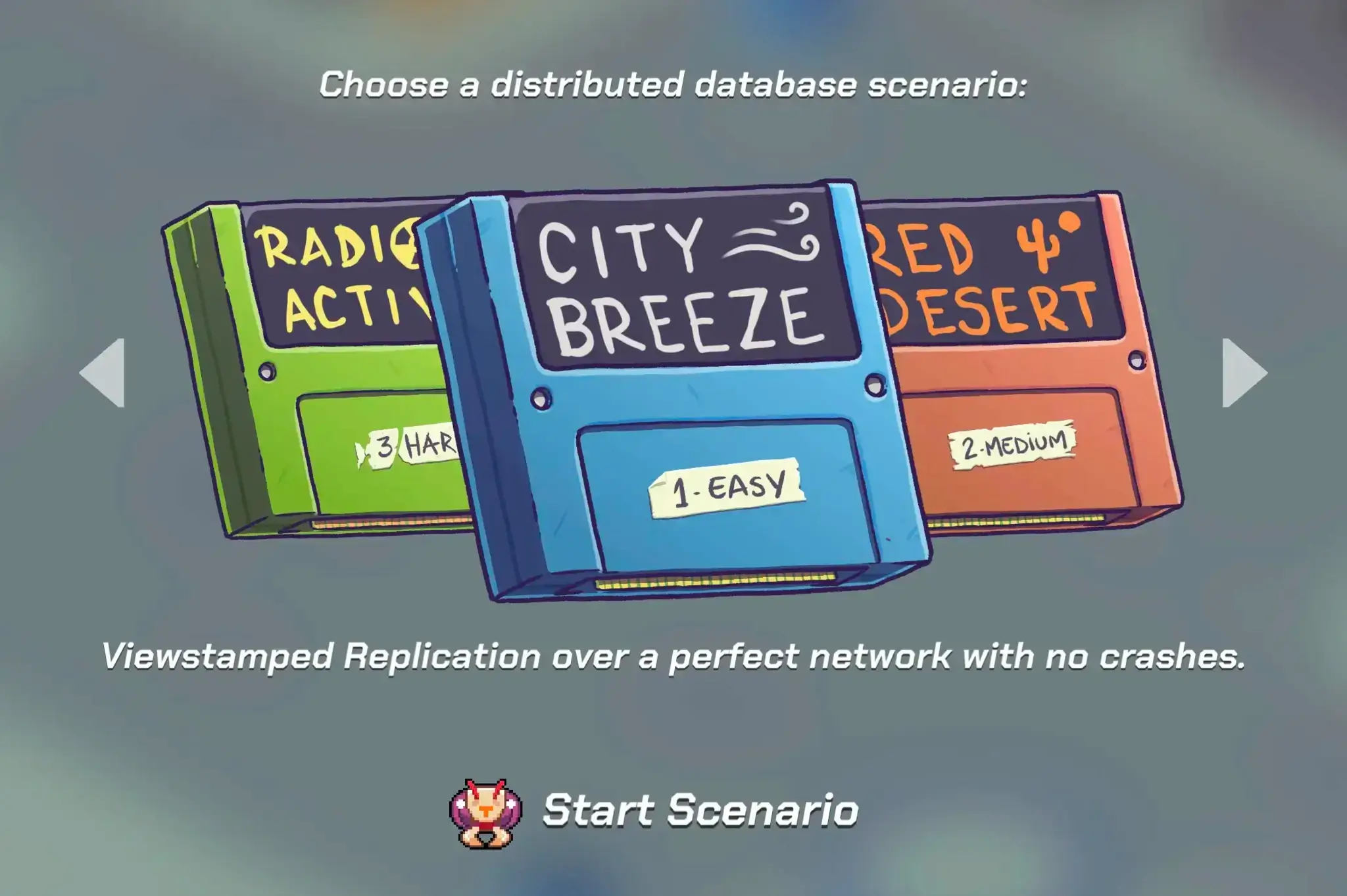

TLDR: You can now run TigerBeetle… compiled to WebAssembly… in your browser! With perfect network conditions, then not-so-perfect Jepsen’esque conditions, and finally, with unprecedented (cosmic) levels of disk corruption.

This is our take on Viewstamped Replication and Protocol-Aware Recovery for Consensus-Based Storage. It’s running in a deterministic simulator (that can speed up time and replay scenarios for debugging velocity), compiled to WebAssembly thanks to Zig’s toolchain. And a graphical gaming frontend on top, for the kid in all of us.

The Viewstamped Operation Replicator (VOPR) takes its name from the WOPR simulator in WarGames, and was inspired by our love of fuzzing, by Dropbox’s Nucleus testing, and by FoundationDB’s deterministic simulation testing.

The impetus behind the VOPR is that complex distributed systems bugs take time to find, and once found, might never be found again. Deterministic simulation testing solves this by combining a fuzzer with simulated time dilation and reproducible failures.
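To make the idea concrete, here is a minimal sketch of a deterministic simulation loop. It is not the VOPR's code: `run_simulation`, the event labels, and the 20% fault rate are all made up for illustration. The point it shows is that when every random decision comes from one seeded PRNG and time is a simulated tick counter, the same seed always replays the same run.

```python
import heapq
import random


def run_simulation(seed, steps=20):
    """Run a toy event loop whose only source of randomness is `seed`."""
    prng = random.Random(seed)  # every random decision flows from this PRNG
    events = [(0, 0, "start")]  # heap of (due tick, sequence number, label)
    sequence = 1
    trace = []
    while events and len(trace) < steps:
        # Jump straight to the next event instead of waiting on a real clock.
        now, _, label = heapq.heappop(events)
        trace.append((now, label))
        # Schedule one follow-up event; the delay and fault are hypothetical.
        delay = prng.randint(1, 1000)
        label = "crash" if prng.random() < 0.2 else "message"
        heapq.heappush(events, (now + delay, sequence, label))
        sequence += 1
    return trace


# A failing seed can be replayed exactly, as many times as needed.
assert run_simulation(42) == run_simulation(42)
```

Because the loop never sleeps, simulated time advances as fast as the CPU can pop events, which is where the time dilation comes from.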

3.3 seconds of VOPR simulation gives you 39 minutes of real-world testing time. An hour gives you a month. A day gives you 2 years. And we run 10 of these VOPR simulators 24/7.
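These figures all follow from one speedup factor, which a few lines of arithmetic can check. The constant and function names below are ours, not the VOPR's.

```python
SIM_SECONDS = 3.3        # wall-clock time spent simulating
REAL_SECONDS = 39 * 60   # real-world time that simulation covers

# Roughly 709x faster than real time.
speedup = REAL_SECONDS / SIM_SECONDS


def covered_days(wall_clock_hours, simulators=1):
    """Days of real-world testing covered by running the simulator(s)."""
    return wall_clock_hours * speedup * simulators / 24


one_hour = covered_days(1)          # about 29.5 days, i.e. a month
one_day = covered_days(24) / 365    # about 1.9 years
ten_sims = covered_days(24, simulators=10) / 365  # about 19 years per day
```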

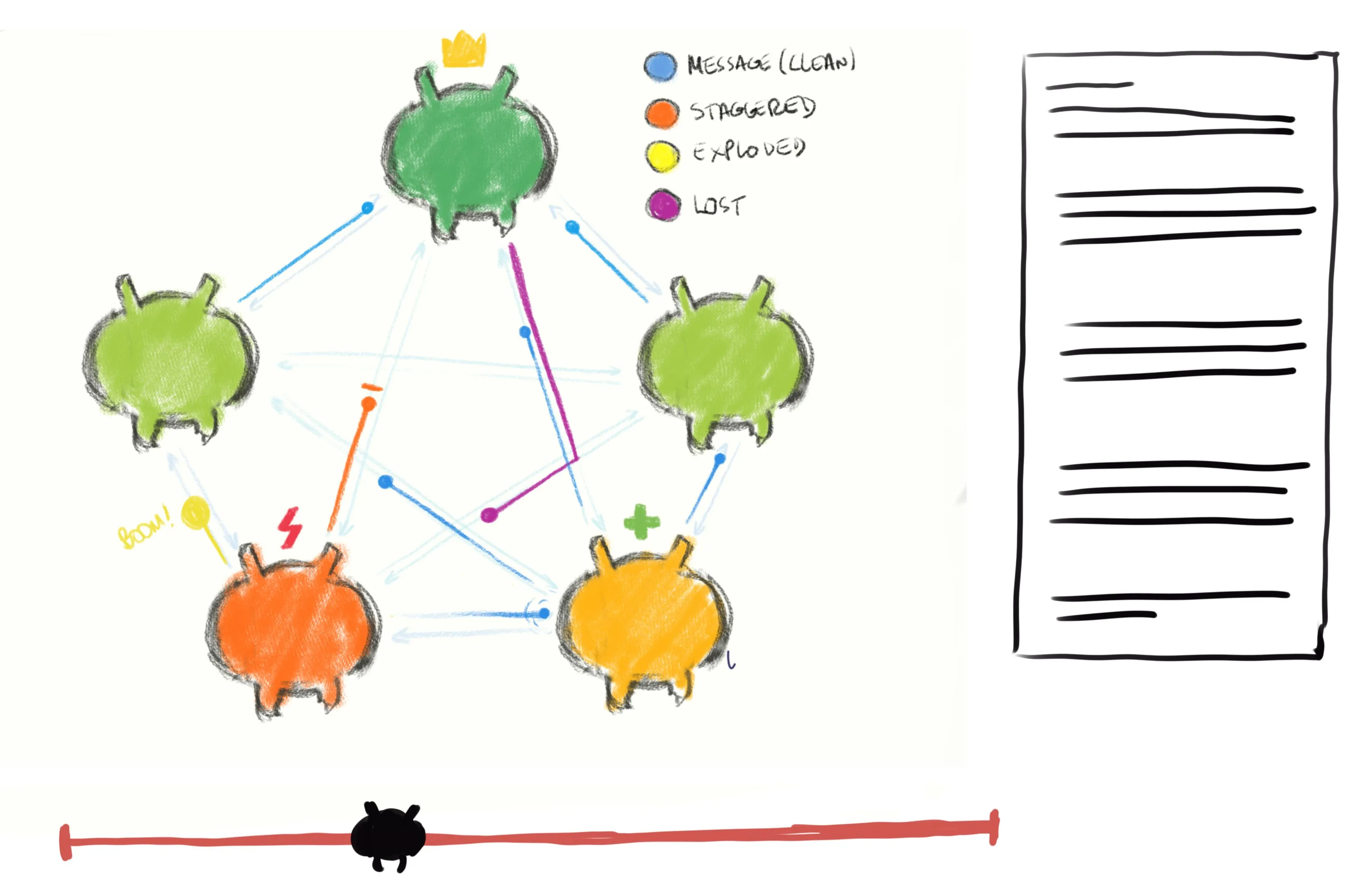

The VOPR fuzzes many clusters of TigerBeetle replicas and clients interacting through TigerBeetle’s Viewstamped Replication consensus protocol (VSR), all in a single process on a developer’s machine. All I/O is mocked out: with a network simulator to simulate all kinds of network faults and latencies, and an in-memory storage simulator to simulate all kinds of storage faults and latencies.
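As a rough illustration of what mocking out the network can look like, the sketch below keeps packets in an in-memory queue and uses a seeded PRNG to drop or delay them. The class name, its parameters, and the tick-based clock are assumptions for this example, not TigerBeetle's actual simulator interface.

```python
import heapq
import random


class SimulatedNetwork:
    """In-memory network that drops and delays packets deterministically."""

    def __init__(self, seed, loss_probability, min_delay, max_delay):
        self.prng = random.Random(seed)
        self.loss_probability = loss_probability
        self.min_delay = min_delay
        self.max_delay = max_delay
        self.in_flight = []  # heap of (deliver_at, sequence, destination, payload)
        self.sequence = 0

    def send(self, now, destination, payload):
        if self.prng.random() < self.loss_probability:
            return  # the packet is silently lost
        deliver_at = now + self.prng.randint(self.min_delay, self.max_delay)
        heapq.heappush(
            self.in_flight, (deliver_at, self.sequence, destination, payload)
        )
        self.sequence += 1

    def deliver_due(self, now):
        """Return every (destination, payload) whose delay has elapsed."""
        delivered = []
        while self.in_flight and self.in_flight[0][0] <= now:
            _, _, destination, payload = heapq.heappop(self.in_flight)
            delivered.append((destination, payload))
        return delivered
```

A storage simulator can follow the same pattern, with reads and writes completing after a simulated latency instead of touching a real disk.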

The VOPR can control which kinds of faults are applied and to what degree, and it verifies linearizability. And the simulator, with varying scenarios, is what you’re now seeing in your browser!

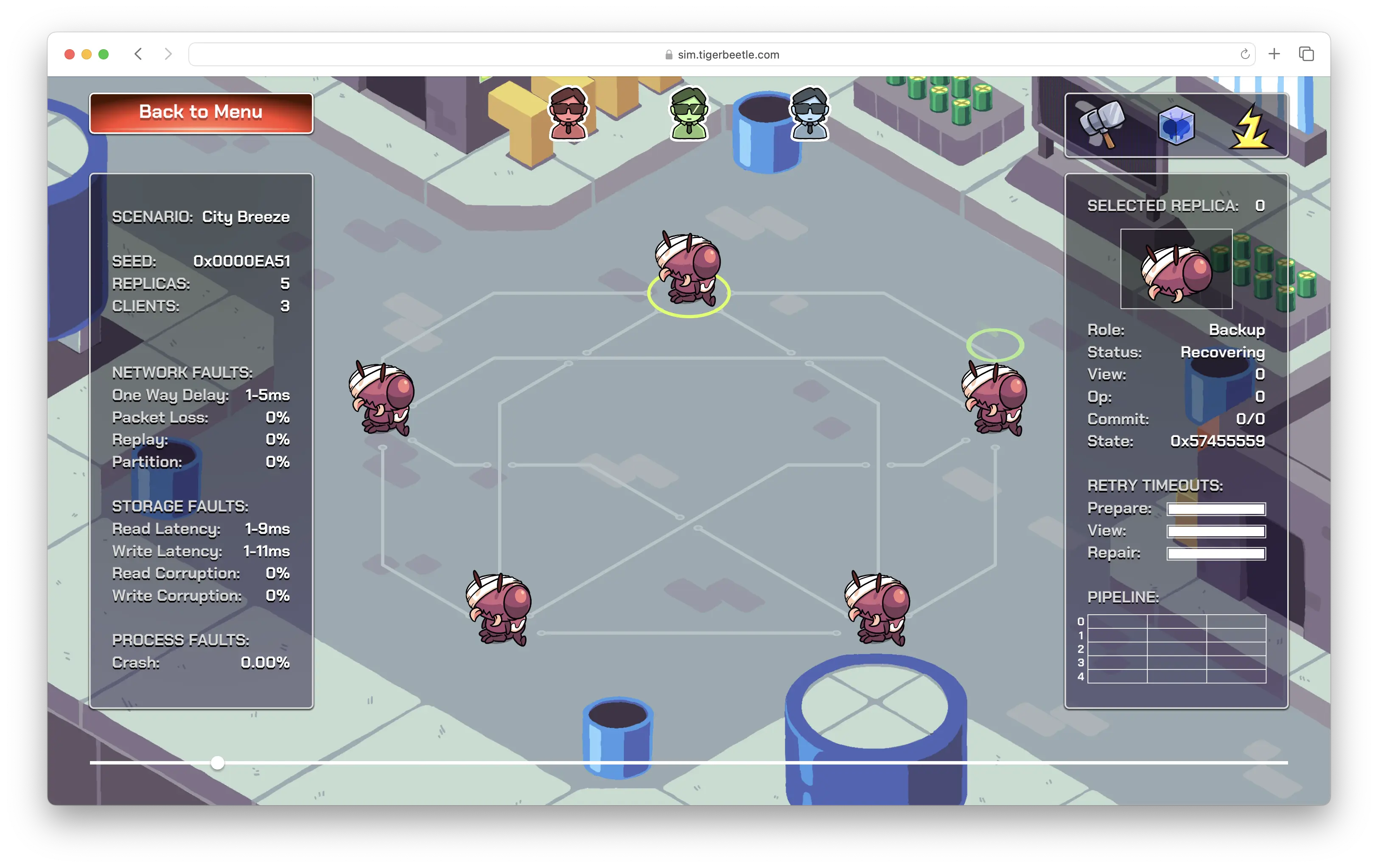

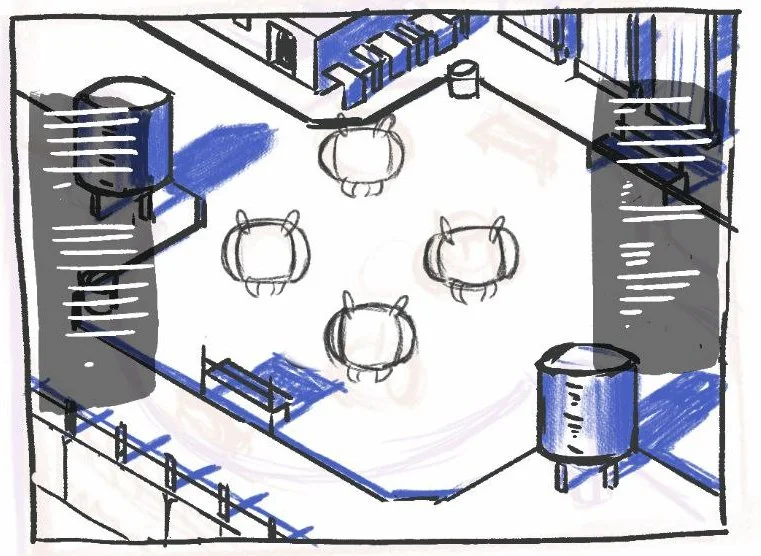

In City Breeze, we simulate a perfect environment. No network partitions. No packet loss or replays. No crashes or disk corruption, and, of course, low latency network and disk I/O.

Once the cluster has started up, clients (at the top of the screen) send new messages to the primary. The primary replicates these messages to the backup replicas (for durability), before replying back to the clients.

You’ll see each replica’s op number (a serial identifier for each message) increase as messages are replicated to that replica, and the commit number increase as replicated messages are then executed through the business logic.
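The two counters can be sketched in a few lines. This is a toy model, not TigerBeetle's implementation: the `Replica` class, the running-total "business logic", and the simple majority quorum are assumptions made for illustration, and the network is replaced by direct method calls.

```python
class Replica:
    def __init__(self):
        self.log = []    # replicated messages, in op order
        self.op = 0      # highest op number appended to the log
        self.commit = 0  # highest op number executed
        self.state = 0   # toy business logic: a running total

    def append(self, message):
        self.log.append(message)
        self.op += 1
        return self.op

    def commit_through(self, commit):
        # Execute replicated messages in order, never past what is in the log.
        while self.commit < min(commit, self.op):
            self.state += self.log[self.commit]
            self.commit += 1


def handle_request(primary, backups, message):
    """Replicate first, then commit on the primary and reply to the client."""
    op = primary.append(message)
    acks = 1  # the primary counts itself
    for backup in backups:
        backup.append(message)  # stands in for a network round trip
        acks += 1
    quorum = (1 + len(backups)) // 2 + 1  # assumed: simple majority
    if acks < quorum:
        return None  # not durable enough to commit yet
    primary.commit_through(op)
    return primary.state
```

In this sketch a backup's commit number trails its op number until it hears the primary's latest commit number, for example via `backup.commit_through(primary.commit)`.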

This is replication at its best (and most intuitive). At least, if for safety you require data to be on more than one machine before committing a transaction with strict serializability.

In such an easy scenario, you’re unlikely to see a view change (where the cluster has to agree on a new replica as primary). That is, replica 0 will be primary and will likely stay primary the whole time.

Again, this is real TigerBeetle code, running a cluster of ‘Beetles… in your browser!

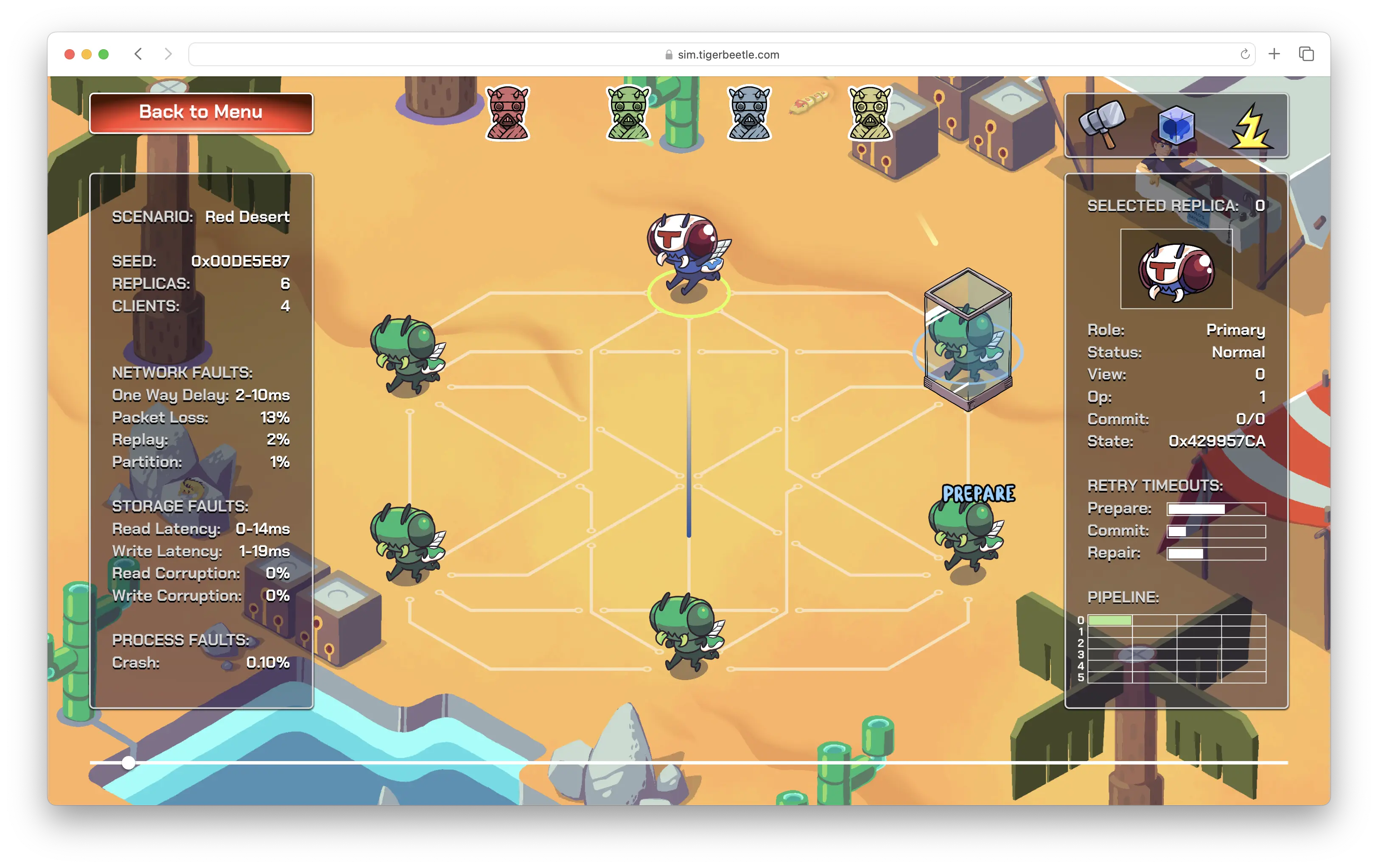

In the second level, things get real. We’re talking Jepsen-level nemeses.

We introduce high storage and network latency, process crashes, and a flaky network, but no disk corruption.

You’re going to see view changes when the primary crashes or is partitioned, and VSR’s telltale round-robin rotation of the new primary among replicas, until a new primary is established. This is in contrast to Raft, which elects a primary at random, but then suffers from the risk of (or increased latency to mitigate) dueling leaders.
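The round-robin rotation can be sketched as a pure function of the view number, which is how Viewstamped Replication Revisited picks the primary. The helper names below are hypothetical, and a real view change also needs a quorum of replicas to agree before the new view starts.

```python
def primary_for_view(view, replica_count):
    """The primary is fixed by the view number, so there is no election."""
    return view % replica_count


def next_view_with_live_primary(view, replica_count, crashed):
    """Advance the view until its designated primary is not in `crashed`."""
    if all(replica in crashed for replica in range(replica_count)):
        raise ValueError("no live replica can become primary")
    candidate = view + 1
    while primary_for_view(candidate, replica_count) in crashed:
        candidate += 1  # skip views whose designated primary is down
    return candidate
```

Since every replica computes the same primary for a given view, two replicas cannot both believe they lead the same view, which is why this scheme sidesteps dueling leaders.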

The committed op number will increase more slowly, as it takes the cluster longer to replicate messages in this more challenging environment—but that’s fine, because you can’t get much worse than this?

Sure, we’re not yet injecting storage faults, but then formal proofs for protocols like Raft and Paxos assume that disks are perfect, and depend on this for correctness? After all, you can always run your database over RAID, right? Right?

If your distributed database was designed before 2018, you probably couldn’t have done much. The research didn’t exist.

Who on earth is that professor with an eye patch in a bathtub?

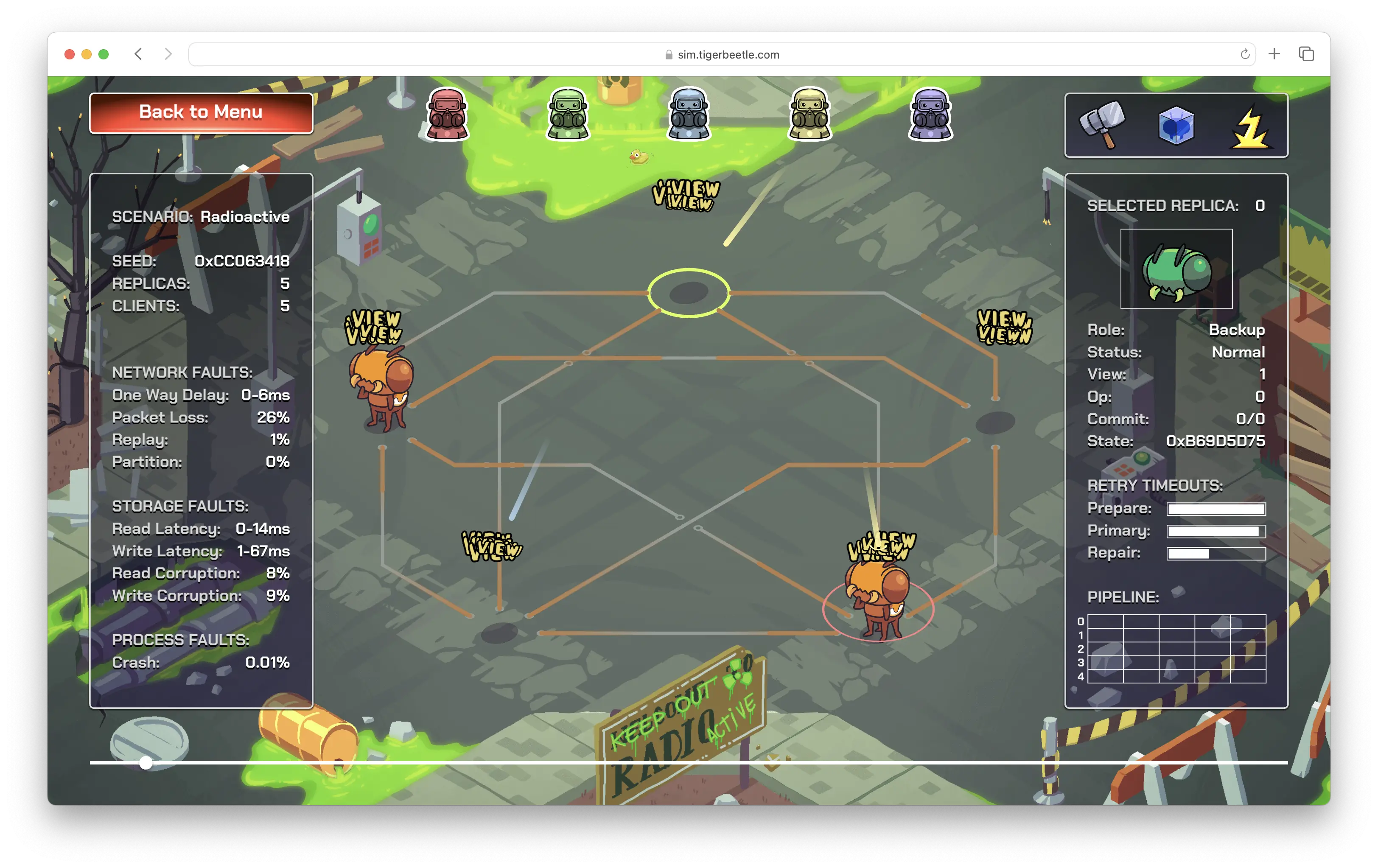

The final level of SimTigerBeetle simulates catastrophic failure. Not just network and process faults but up to 8% corruption probability on the storage read path, and 9% corruption probability on the storage write path—for each replica! Again, this is something Jepsen (and most databases in general) don’t fully test for (or recover from yet).

And this is astronomic failure. If a disk were corrupting 8% of data, you’d throw it away immediately. But this is a scenario TigerBeetle actually handles and recovers from.
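One way to picture surviving this kind of corruption is to checksum every write, verify every read, and repair from another replica when verification fails. The sketch below is only that picture: `FaultyDisk`, `read_with_repair`, and the use of CRC-32 are stand-ins chosen for illustration, not TigerBeetle's actual checksums or repair protocol.

```python
import random
import zlib


class FaultyDisk:
    """In-memory disk that corrupts reads with a fixed probability."""

    def __init__(self, seed, read_corruption_probability):
        self.prng = random.Random(seed)
        self.read_corruption_probability = read_corruption_probability
        self.sectors = {}

    def write(self, address, data):
        # Store a checksum next to the (non-empty) data.
        self.sectors[address] = (zlib.crc32(data), data)

    def read(self, address):
        """Return the data, or None if it fails checksum verification."""
        checksum, data = self.sectors[address]
        if self.prng.random() < self.read_corruption_probability:
            damaged = bytearray(data)
            damaged[0] ^= 0xFF  # flip every bit of the first byte
            data = bytes(damaged)
        return data if zlib.crc32(data) == checksum else None


def read_with_repair(address, local, peers):
    """Fall back to other replicas' disks when the local copy is corrupt."""
    data = local.read(address)
    if data is not None:
        return data
    for peer in peers:
        data = peer.read(address)
        if data is not None:
            local.write(address, data)  # repair the local copy
            return data
    return None  # every copy failed verification this time
```

With `read_corruption_probability=0.08` on each replica, as in this level, a single read often hits a bad copy, but all copies of the same data being bad at once is much rarer.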

With the VOPR, we’ve been able to explore and test TigerBeetle against huge state spaces in a short amount of time, by literally speeding up the passing of time within the simulation itself. Just like the Matrix.

The VOPR is only one of the ways we test TigerBeetle. But it is the biggest reason we’ve been able to build confidence in our system in such a short time.

We started this project in July 2022, with Joran pitching a wireframe of the idea to illustrator Joy Machs and game developer Fabio Arnold. We wanted an interactive demo to explain how TigerBeetle works. Simple enough for a child to watch, understand and get excited about.

Although Zig compiles easily to WebAssembly, WebAssembly is a 32-bit architecture and TigerBeetle supports only 64-bit architectures. Getting the simulator’s memory usage below 2 GiB (for the whole cluster!) was one of the challenges Fabio worked through to get this running in the browser.

The graphics are rendered in WebGL with Fabio’s port of nanovg to Zig.

Joy Machs has been illustrating for the Zig communities since 2021, beginning with the mascot, Suzie. And he’s been working with TigerBeetle since that same year to tell our story through all the artwork you see.

TigerBeetle takes cues from LMAX by Martin Thompson; storage fault research by Remzi and Andrea Arpaci-Dusseau at UW-Madison with Ramnatthan Alagappan and Aishwarya Ganesan; Viewstamped Replication by Brian M. Oki, Barbara Liskov, and James Cowling; and many others (check out the full collection of papers behind TigerBeetle).

Finally, to all of you who have inspired and encouraged us along the way: this is your game.

Can’t play the game? Let us know! In the meantime, we’ve got you covered — watch our recording of the game in action!